TL;DR

- Google focuses on meaning rather than wording, so AI rewrites look like duplicates.

- They lack real experience, expertise, authority, and trust (E-E-A-T).

- Without strong site signals or backlinks, they can’t compete with originals.

- Users leave quickly when content feels generic, hurting rankings.

- They add no new insight, so Google has no reason to rank them.

Here is a question we hear often from business owners, marketing leads, and even some SEO professionals: “The competitor is ranking with that blog. Can we just find their top blogs and have AI rewrite them? We will be the experts now, right?”

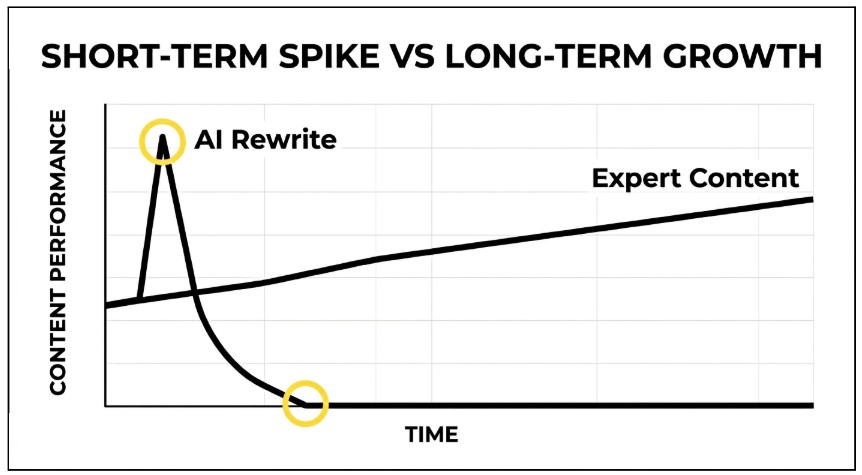

It sounds logical. It feels efficient. And if you have tried it, you may have even seen a brief flicker of early traction, enough to make you think it is working.

It is not.

Over the past three years of auditing and building content strategies for B2B and SaaS websites, we have reviewed more than 1,000 blog pages that relied on AI rewriting or “skyscraper” techniques. The pattern is consistent: these pages may gain impressions in the first 2–4 weeks, but fewer than 10% sustain top-10 rankings beyond the first 60 days.

In this blog post, we’ll explain exactly why, covering the technical, algorithmic, and human-behavioural reasons. All of it is drawn from Google’s own guidelines, algorithm update data, and patents that most content teams have never read.

| Google reported that its March 2024 update reduced low-quality, unoriginal content in its search results by 45%. Hundreds of sites were deindexed entirely. Most had one thing in common: AI-rewritten or mass-produced content with no original insight. |

What Does Google Actually Say About AI Content?

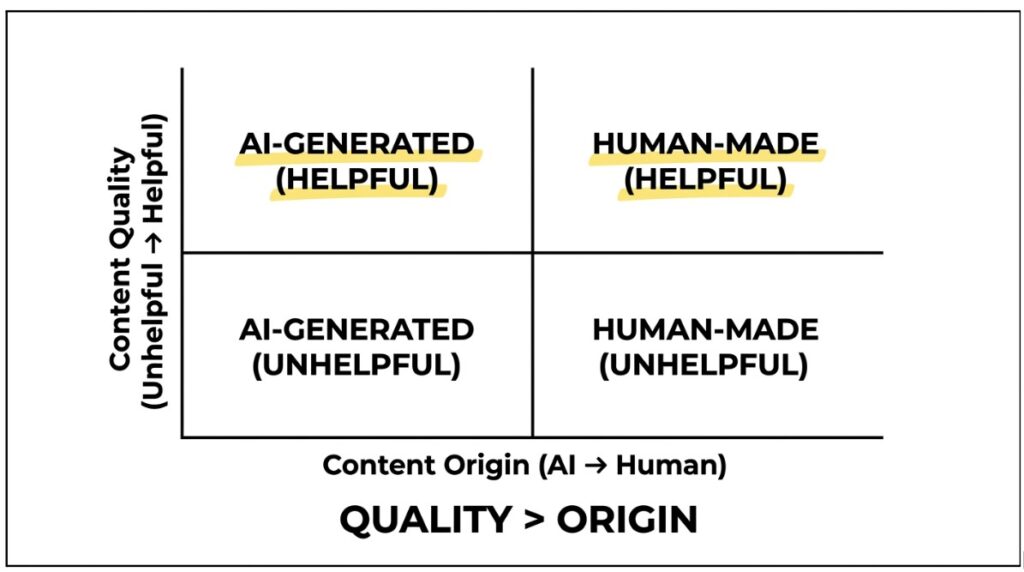

Let us start with the part that confuses most people. Google’s official position is not anti-AI. Google has explicitly stated it does not penalise content for being AI-generated. What it penalises is content that is unhelpful, unoriginal, and created primarily to manipulate rankings, regardless of how it was made.

That distinction matters enormously. Because here is what it means in practice: an AI rewrite of a competitor’s blog ticks every single one of Google’s negative boxes, while ticking almost none of its positive ones.

| Google’s own words: “Our focus is on the quality of content, rather than how content is produced.” – Google Search Central, February 2023 |

The real question, then, is not whether AI wrote it. The real question is: does this content offer anything new, accurate, and genuinely useful, or is it derivative information in a different suit?

For AI rewrites, the honest answer is almost always: derivative information in a different suit.

Read More: What Does Google Think of AI Content and 6 Best Practices for Using AI Content

Reason 1: Google Reads Meaning, Not Just Words (The Semantic Fingerprint)

This is where it gets genuinely interesting, and where most people’s mental model of “how Google works” is simply wrong.

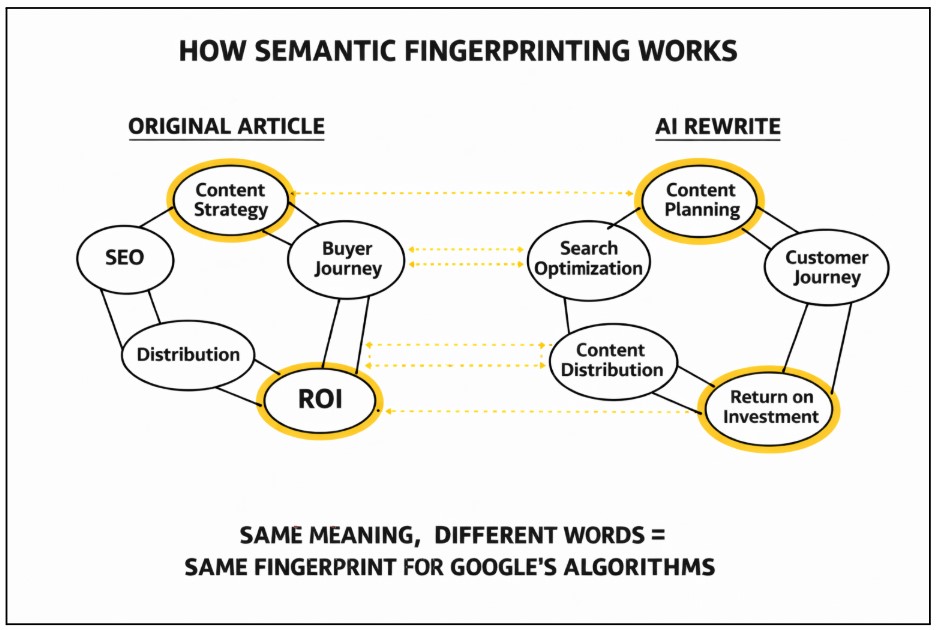

Google does not read your content like a human editor scanning for copied sentences. It converts every piece of content into a mathematical vector, called an embedding, that represents the article’s meaning, topic clusters, context, and semantic relationships. Think of it as a content fingerprint: not based on the words, but on what those words mean and how they relate to each other.

When you write about, say, “content marketing strategy,” Google’s NLP systems expect to find specific concept clusters: buyer journey, content formats, distribution channels, measurement, and ROI. When an AI rewrites an existing article, it shuffles the surface language but keeps the same concept clusters, the same information architecture, and the same semantic fingerprint.

So even though not a single sentence is copied, Google sees near-identical embeddings and flags the content as derivative.
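To make the idea concrete, here is a toy sketch in Python. It is not Google’s system: real embeddings come from large neural models, whereas this example relies on a tiny hand-written lexicon (`CONCEPTS`, a hypothetical name of our own) that maps surface words to the concept they express. The point it illustrates is narrow: two passages that share almost no wording can still land on nearly the same concept vector.

```python
import math
import re
from collections import Counter

# Hypothetical lexicon (an assumption for illustration only):
# each surface word is mapped to the concept cluster it expresses.
CONCEPTS = {
    "buyer": "buyer_journey", "customer": "buyer_journey", "funnel": "buyer_journey",
    "formats": "content_formats", "blogs": "content_formats", "videos": "content_formats",
    "channels": "distribution", "promote": "distribution",
    "roi": "measurement", "metrics": "measurement", "measure": "measurement",
}

def concept_vector(text):
    """Count how often each concept cluster appears in the text."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(CONCEPTS[w] for w in words if w in CONCEPTS)

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

original = "Map the buyer journey, pick formats, choose channels, then measure ROI."
rewrite = "Understand the customer funnel, select blogs and videos, promote widely, track metrics."
unrelated = "Knead the dough, let it rest overnight, then bake until golden."

print(cosine(concept_vector(original), concept_vector(rewrite)))    # high, despite different wording
print(cosine(concept_vector(original), concept_vector(unrelated)))  # 0.0, no shared concepts
```

The rewrite changes nearly every word, yet its concept vector points in almost the same direction as the original’s. That is the intuition behind a shared semantic fingerprint.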

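| The key insight: SimHash, a fingerprinting technique that Google researchers have described for near-duplicate detection, uses a mathematical measure called Hamming distance to compare content fingerprints. A small Hamming distance between two articles signals near-duplication, even when words have been swapped or sentences reshuffled. SimHash works on surface features, so heavier paraphrases are caught by the meaning-level comparison described above rather than by the fingerprint alone. |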
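For readers who want to see the mechanics, below is a minimal SimHash sketch in Python. Several choices are our assumptions rather than anything Google has published about its production setup: single words as features, equal weights for every word, MD5 as the underlying hash, and a 64-bit fingerprint.

```python
import hashlib
import re

def simhash(text, bits=64):
    """Build a SimHash fingerprint from unweighted word features (bits must be <= 64 here)."""
    weights = [0] * bits
    for token in re.findall(r"\w+", text.lower()):
        # Take the first 8 bytes of the MD5 digest as a 64-bit hash of the token.
        h = int.from_bytes(hashlib.md5(token.encode("utf-8")).digest()[:8], "big")
        for i in range(bits):
            # Each token votes +1 or -1 on every bit position.
            weights[i] += 1 if (h >> i) & 1 else -1
    fingerprint = 0
    for i, w in enumerate(weights):
        if w > 0:  # positive vote total sets the bit
            fingerprint |= 1 << i
    return fingerprint

def hamming_distance(a, b):
    """Number of bit positions in which two fingerprints differ."""
    return bin(a ^ b).count("1")

page_a = "A content marketing strategy maps the buyer journey and measures ROI."
page_b = "A content marketing strategy maps the customer journey and measures ROI."
print(hamming_distance(simhash(page_a), simhash(page_b)))
```

Because every word only nudges the vote on each bit, changing a few words flips only a few bits. Pages whose fingerprints differ in very few positions are treated as near-duplicate candidates; the cut-off is a tuning decision.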

The result? Google finds the article it deems the original, highest-authority source (the one with the strongest E-E-A-T signals) and ranks it. The AI rewrite becomes invisible.

The only way to create a genuinely new semantic fingerprint is to introduce something the original article does not contain: original data, a unique business perspective, first-hand experience, or insights from real expertise. An AI rewording exercise cannot manufacture any of these.

| B2B SaaS websites that published original research saw an average 50% increase in top-10 keyword rankings between January 2023 and January 2024, according to a year-long analysis of 250 companies by Stratabeat. Google’s systems are specifically designed to surface new information, not new sentences about the same information. |

Earlier this year, a B2B client approached us after publishing 30+ AI-rewritten blogs over three months. Despite targeting high-volume keywords, none of the pages ranked in the top 20. When we replaced just 5 of those articles with expert-led content that included original insights and internal data, 3 of them entered the top 5 within 8 weeks. The difference was not length or optimisation; it was original perspective.

Reason 2: E-E-A-T Is Not a Checklist. It Is a Signal Google Has to Find Elsewhere.

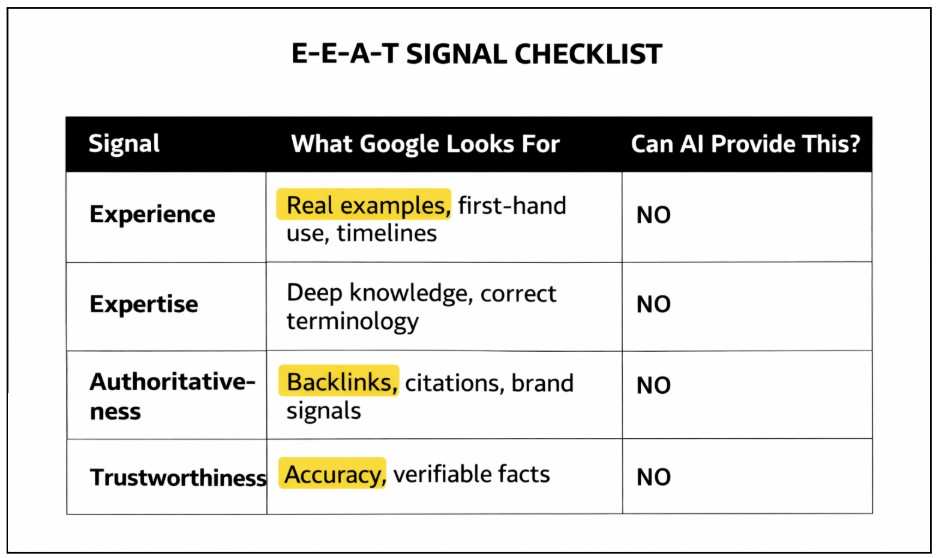

Google’s Quality Rater Guidelines, the document Google uses to train human evaluators who assess search quality, require content to demonstrate four things: Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T).

The important word in all four is ‘demonstrate.’ Google does not take your word for it. It has to find supporting evidence, and it looks in very specific places.

Experience: The Signal AI Cannot Fake

Experience signals in content include:

- First-person narratives from people who have actually done the thing

- Specific timeframes and context (“we ran this campaign for six months and here is what happened”)

- Before-and-after examples

- Screenshots of real results

- Business-specific anecdotes

An AI rewrite has none of this. It has a paraphrased theory.

Expertise: The Signal That Lives in Specificity

These are what separate ‘written by someone who knows this industry’ from ‘written by a system that has read about this industry’:

- Technical term usage

- Contextually accurate application of domain knowledge

- Expert-level distinctions between related concepts

AI models are trained to match linguistic patterns. That often produces competent-sounding summaries. It rarely produces the kind of specific, nuanced insight that a practitioner with years of experience brings.

Read More: Difference Between Experience & Expertise in Google’s E-E-A-T

Authoritativeness: A Web-Wide Signal, Not an On-Page One

Authoritativeness is not something you establish within a single article. Google looks at:

- Who is linking to your content and why

- Whether your brand is searched for directly

- Whether your authors have verified profiles and published work elsewhere

- Whether your content is cited as a reference by other trusted sources

An AI-rewritten blog starts this race with nothing because none of these external signals exist for a derivative piece.

Trustworthiness: Where AI Hallucination Becomes an Active Liability

AI systems hallucinate. They cite sources that do not exist. They state statistics that are fabricated. They describe procedures that are technically inaccurate. Google’s systems verify factual accuracy using the Knowledge Graph and cross-reference signals, and inaccurate content actively erodes trust signals, sometimes permanently.

Reason 3: Google Does Not Evaluate Your Content in Isolation

Here is what most people miss entirely: a single blog post does not rank in isolation. Google evaluates it within the context of your entire site, your topic cluster architecture, your backlink profile, your author credentials, and your historical content quality.

When you publish an AI rewrite, Google’s systems assess:

- How many related articles on this site cover this topic cluster? (Topical authority)

- How many other sites link to this article, and are they credible? (External validation)

- How does this article connect to other pages on the same site? (Internal linking structure)

- Does the author have verifiable credentials in this domain? (Author trust)

- Has this domain historically published accurate, original content? (Domain trust)

An AI-rewritten article on a new or thin site has none of these supporting signals. The article competes not just on its own content quality, but on the entire ecosystem of credibility that surrounds it. The original article, written by a brand with years of topical authority, real backlinks, and an established author, wins on every one of these dimensions.

| The compounding problem: If more than 30% of your site’s content is detected as copied, low-quality, or without original insight, Google lowers your site-wide quality score. This affects not just the AI-rewritten pages; it drags down pages across your entire domain. |

Read More: How to Build Topical Authority in a Niche (+Real Examples)

Reason 4: Real Readers Detect Generic Content Faster Than Google Does

Even if an AI rewrite somehow cleared all the algorithmic hurdles above (which it will not), it would fail at the final gate: the actual human being who reads it.

When a reader arrives at a page that echoes what they just read somewhere else, or that fails to answer the specific question they actually had, they leave. Quickly. Google measures this. Dwell time, scroll depth, return-to-SERP rates, direct traffic, and branded search volume – these are all user behaviour signals that tell Google whether your content satisfied the reader’s intent.

Negative user signals accelerate ranking decay faster than almost any algorithmic factor. An article can rank briefly and then collapse within weeks, simply because real readers consistently told Google the page was not worth their time.

And once that signal settles, recovery is slow and painful.

| Content | What Google Finds |
|---|---|
| Original article | Years of topical authority, real backlinks, author trust, internal links |
| AI rewrite | No external links, no topical depth, no author credibility, no cluster |
| What Google does | Ranks the original. Ignores or demotes the rewrite. |

Read More: Optimise your Content for Search Intent and Get high Ranking

Google’s Detection Stack: More Sophisticated Than You Think

It is worth understanding exactly what tools Google has deployed, because the scale is significant.

- SpamBrain And Other Automated Spam Systems: Google says it uses automated systems to detect spam continuously, and it explicitly names SpamBrain as its AI-based spam-prevention system that is updated to catch new spam patterns.

- Canonicalization And Near-Duplicate Clustering: Google groups pages with similar content and selects a representative canonical URL, which means a rewritten page can be treated as a duplicate variant rather than a new authority.

- Scaled Content Abuse Detection: Google’s spam policies specifically call out large-scale unoriginal content created to manipulate rankings, regardless of whether it was produced by humans, templates, or AI.

- Quality Evaluation Through E-E-A-T And Rater Feedback: Google says its automated systems look for signals aligned with Experience, Expertise, Authoritativeness, and Trustworthiness, while human quality raters help assess whether the systems are producing good results.

- Site-Wide And Page-Level Trust Signals: Google’s ranking systems use many signals at both the page and site level, including technical and page-experience factors, so weak content on a weak domain is easier to suppress than strong content on an established one.
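The near-duplicate clustering step in the list above can be sketched roughly as follows. This is an illustration under our own assumptions, not Google’s canonicalization logic: pages are compared by Jaccard similarity over three-word shingles, grouped greedily, and the representative is chosen by a hypothetical `authority` number that we made up for the example. Since shingles are lexical, a thorough paraphrase would need a meaning-based signature instead.

```python
import re

def shingles(text, k=3):
    """Set of overlapping k-word sequences in the text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}

def jaccard(a, b):
    """Share of shingles the two sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster_pages(pages, threshold=0.5):
    """Greedy grouping: a page joins the first cluster whose seed page it resembles."""
    clusters = []
    for page in pages:
        signature = shingles(page["text"])
        for cluster in clusters:
            if jaccard(signature, cluster["seed"]) >= threshold:
                cluster["members"].append(page)
                break
        else:
            clusters.append({"seed": signature, "members": [page]})
    return clusters

def pick_canonical(cluster):
    """Choose the representative URL using the hypothetical authority score."""
    return max(cluster["members"], key=lambda p: p["authority"])["url"]

# Example pages; URLs, text, and authority values are invented.
pages = [
    {"url": "https://original.example/guide", "authority": 80,
     "text": "content marketing strategy starts with mapping the buyer journey and choosing formats"},
    {"url": "https://rewrite.example/guide", "authority": 5,
     "text": "content marketing strategy starts with mapping the buyer journey and picking formats"},
    {"url": "https://other.example/bread", "authority": 40,
     "text": "how to bake sourdough bread at home"},
]

for cluster in cluster_pages(pages):
    print(pick_canonical(cluster), len(cluster["members"]))
```

In this toy run the lightly reworded page lands in the same group as the original, and the original is the one selected to represent the group.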

| In Originality.ai’s March 2024 study of websites hit by Google manual actions, 100% of the sites analysed contained some AI-generated posts, and 50% had 90–100% of their content created by AI. (Source: Originality.ai, March 2024 study) |

Even publicly available AI detectors show a detection rate ranging from around 63% to 100% for ChatGPT-generated content, depending on the tool and model. Google’s internal systems are more sophisticated still.

So, What Actually Works? The Right Way to Use AI in Content

Here is the nuance that gets lost in the debate: the problem is not AI. The problem is using AI as a replacement for human expertise, judgment, and original thinking rather than as a support tool for it.

The content workflows that consistently produce ranking content in 2026 and beyond look something like this:

- Deep brief creation: Mapping real user questions, topical gaps, keyword intent, and business-specific context before a single word is written.

- Human-led research and writing: A subject matter expert or experienced writer who actually understands the topic creates the first draft, informed by real knowledge.

- AI as an editorial aid: Used to check for coverage gaps, suggest structural improvements, improve clarity, or generate multiple options for a headline or meta description. Never used to generate primary content.

- Editorial review: A senior editor checks for factual accuracy, logical flow, brand voice, and original value before anything is published.

- SEO optimisation and publishing: Internal linking, image optimisation, structured data, and SEO signals applied as a final layer.

The key distinction: AI assists the human expert. The human expert does not become a prompt engineer for AI output.
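As one concrete piece of the final workflow step, structured data can state plainly who wrote a page. The sketch below builds schema.org `Article` markup in Python. Every value in it (names, URLs, dates) is a placeholder we invented, and adding markup like this describes authorship to search engines without guaranteeing any ranking benefit.

```python
import json

# All values are placeholders; replace them with your real page and author details.
article_schema = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example headline",
    "datePublished": "2025-01-15",
    "author": {
        "@type": "Person",
        "name": "Jane Example",
        "jobTitle": "Head of Content",
        "url": "https://example.com/authors/jane-example",
        "sameAs": ["https://www.linkedin.com/in/jane-example"],
    },
    "publisher": {"@type": "Organization", "name": "Example Co"},
}

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
json_ld = json.dumps(article_schema, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The markup only helps when it describes a real author with a real track record, which is why it belongs at the end of the workflow rather than the start.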

Read More: Should Your Business Rely on AI for Website Content?

The Google Information Gain Principle: What It Really Means for Your Content

In June 2022, Google was officially granted a patent for what it calls an “Information Gain Score,” a mechanism that scores content based on how much new information it adds beyond what is already available in the search results for that query.

The implications are significant. Google’s systems are specifically designed to identify content redundancy and promote content that expands the user’s knowledge. If Google has already indexed 30 articles on “content marketing strategy” and your article says the same things in different words, it has low information gain. It does not need to exist in the SERP.

An AI rewrite, by its very nature, has near-zero information gain. It takes existing information and rearranges it. Every signal in Google’s system is oriented to surface content that adds something new.
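A rough way to picture this is the toy score below. It is our simplification, not the method in the patent: it simply measures what share of an article’s distinct terms does not already appear in the pages ranking for the query. The function names, the stopword list, and the sample sentences (including the survey figure) are all invented for illustration.

```python
import re

# Small illustrative stopword list (an assumption, not a standard one).
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "with", "is"}

def terms(text):
    """Distinct lowercase terms in the text, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def information_gain(candidate, already_ranking):
    """Fraction of the candidate's terms not found in any already-ranking page."""
    seen = set().union(*(terms(doc) for doc in already_ranking))
    candidate_terms = terms(candidate)
    if not candidate_terms:
        return 0.0
    return len(candidate_terms - seen) / len(candidate_terms)

ranking = ["A content marketing strategy needs buyer journey mapping and ROI measurement."]
rewrite = "Buyer journey mapping and ROI measurement: the needs of a content marketing strategy."
original_research = "Our survey of 40 SaaS teams found content marketing strategy budgets shifting to webinars."

print(information_gain(rewrite, ranking))            # 0.0: nothing new
print(information_gain(original_research, ranking))  # 0.75: mostly new terms
```

A real system would compare meaning rather than vocabulary, so swapping in synonyms would not rescue the rewrite. The direction of the result is the point: rearranged information scores at the bottom, new information scores high.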

| The takeaway for content teams: Stop asking “what are the top articles ranking for this keyword?” as the only research question. Start asking: “What does the reader still not know after reading all of these articles? What can we say that no one else has said?” That gap is where rankings live. |

FAQs: Quick Answers to Questions We Hear All the Time

Can Google detect AI-rewritten content?

Yes, through a combination of SpamBrain, SynthID watermarking, semantic embedding analysis, SimHash near-duplicate detection, and human quality raters. It does not need to “detect AI” per se. It detects low quality, low originality, and low user satisfaction, all of which AI-rewritten content typically scores poorly on.

Does Google penalise AI-generated content?

Google does not penalise content for being AI-generated. It penalises content that is unhelpful, unoriginal, or manipulative, regardless of creation method. The distinction is important: good AI-assisted content, refined by human expertise, can rank well. Pure AI rewrites almost always fail the quality test.

A competitor is ranking with similar content. Why can’t we do the same?

Ranking position is a snapshot. Sites can rank briefly with thin content before user signals catch up. More importantly, your competitor may have domain authority, topical depth, and backlinks your site does not. And that ecosystem is carrying the page, not just the content itself.

How long does an AI rewrite take to lose its rankings?

It varies. Some pages never rank meaningfully. Others see a brief spike and then drop within 4–12 weeks as user behaviour signals accumulate. The trajectory is almost always downward.

The Bottom Line

The idea that you can take your competitor’s best-performing content, run it through AI, and emerge as the new authority is a misunderstanding of how Google’s systems actually work at every layer.

Google does not read sentences. It reads meaning. It does not just scan a page. It evaluates an ecosystem. And it does not rank content. It ranks trust, originality, and demonstrated expertise.

No AI rewrite can manufacture any of these things. They have to be earned.

The good news is that for brands willing to invest in genuinely useful, expert-led, original content, the competitive advantage has never been greater. Most of the web is busy producing mediocrity at scale. The bar for standing out is actually lower, not because standards have dropped, but because so many competitors have abandoned the effort.

At Justwords, this is exactly where we spend our time: building content systems grounded in original insight, expert knowledge, and a deep understanding of what Google’s systems are actually rewarding. If your current content strategy relies on AI-rewritten content or “skyscraper” tactics alone, it is worth a conversation.

Further Reading: 8 Main Steps to Create a Content Marketing Strategy for Web